Three Hundred Sixty Billion Dollars and Basically Zero

Big Tech's 2025 AI spending produced no measurable economic impact. The historical record says that's exactly what should happen.

I keep a running list of numbers that don’t belong in the same sentence. The kind that make you read them twice, do rough math on the back of something, and then sit quietly for a minute.

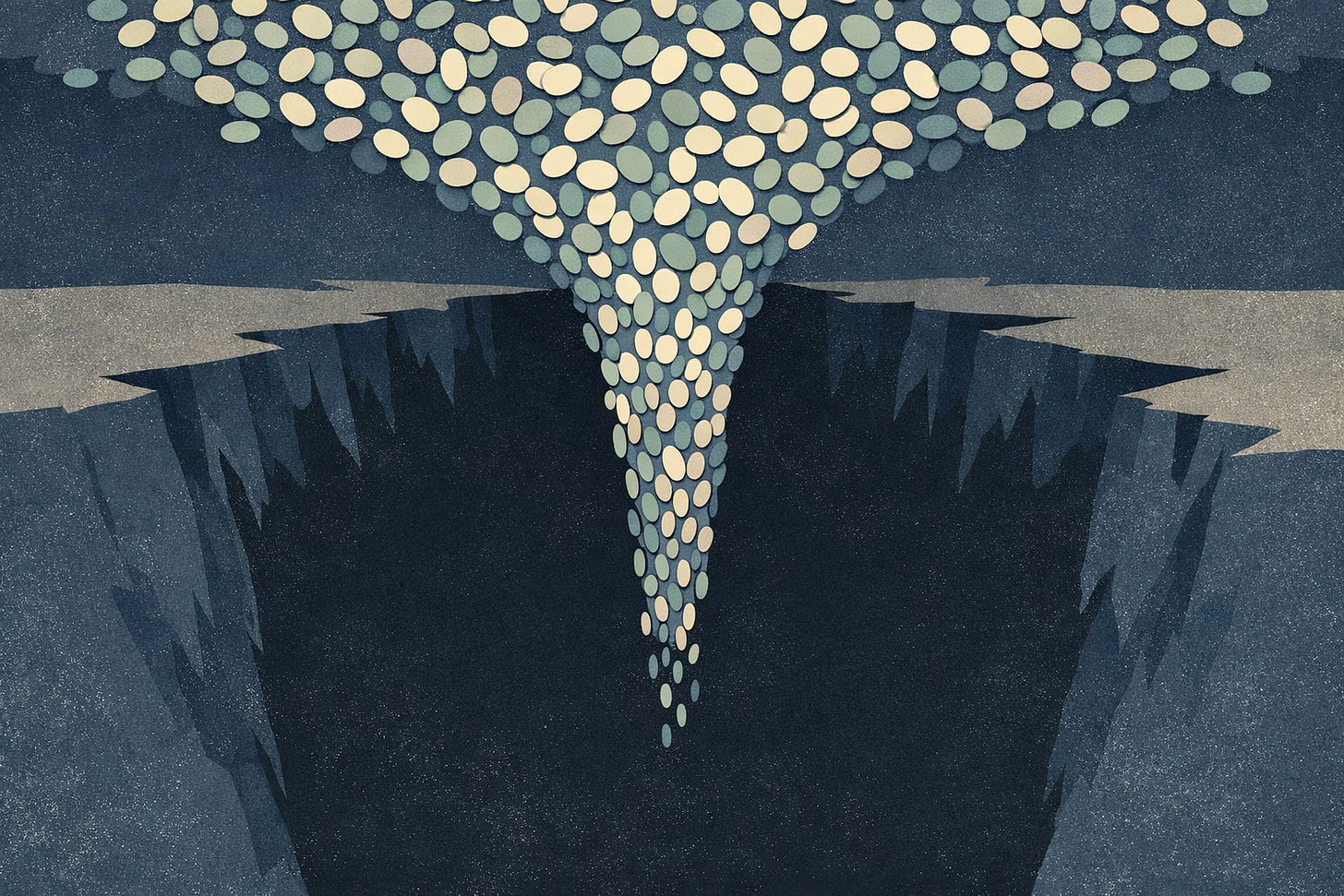

Here’s the latest pair: Amazon, Alphabet, Meta, and Microsoft collectively pledged over $360 billion in AI-related capital expenditure for 2025. Goldman Sachs chief economist Jan Hatzius looked at the incoming macroeconomic data and described AI’s actual impact on U.S. economic growth so far as “basically zero.”

Three hundred sixty billion dollars. Basically zero.

Apollo’s chief economist Torsten Slok put a finer point on it: “AI is everywhere except in the incoming macroeconomic data.” A Denmark-wide study that linked ChatGPT adoption directly to administrative earnings records found essentially zero aggregate effects on earnings or hours worked through 2024. An NBER survey of nearly 6,000 senior executives found that nine out of ten reported zero impact on employment or productivity from AI over the prior three years. The Census Bureau’s Business Trends and Outlook Survey (BTOS) showed that only about 18% of U.S. firms used AI at all by the end of 2025, and a mere 6.1% used it in actual production.

Those numbers should bother anyone paying attention. They bother me, and I’ve spent the last two years telling engineering leaders that AI adoption is a strategic priority.

But before we reach for the obvious conclusion, there’s another set of numbers that deserve the same weight.

ChatGPT reached 900 million weekly active users by early 2026. AI coding tools went from $550 million to $4 billion in a single year. Claude Code went from zero to $2.5 billion in annualized revenue in roughly nine months. AlphaFold cracked the 50-year-old protein folding problem, and its creators, Demis Hassabis and John Jumper, shared the 2024 Nobel Prize in Chemistry. AI-designed drug molecules are clearing Phase I clinical trials at success rates roughly double the historical baseline.

So we have a technology that produces Nobel Prizes and basically zero GDP growth. That generates billions in product revenue while burning through those billions even faster. That half the developer population uses daily while nine out of ten executives say it hasn’t changed anything.

This is the contradiction I want to sit with, because I think most of the commentary gets it wrong by resolving the tension too quickly. The bears say it’s a bubble. The bulls say we’re early. Both are reaching for comfort. The honest position is harder: both of those things might be true at the same time, and the historical record strongly suggests they are.

Warren Street Wealth Advisors estimated the AI sector’s investment-to-revenue ratio at roughly six to seven times. For context, the dot-com bubble’s ratio was about four times, and the railroad boom’s about two times. Relative to what the spending is actually generating, we are outspending both of those episodes.

And a meaningful chunk of the AI revenue that does exist is circular. Microsoft invests in OpenAI. OpenAI spends on Azure. Amazon invests in Anthropic. Anthropic spends on AWS. The money moves in a loop that flatters the revenue lines of companies that are also the biggest spenders. I’ve seen this pattern before in enterprise software, where vendors buy each other’s products to hit partnership targets. It looks like a market until you trace where the cash actually goes.

OpenAI reached roughly $25 billion in annualized revenue by early 2026 but is projected to burn $9 billion this year and $17 billion next year. Deutsche Bank estimates $140 billion in cumulative losses from 2024 to 2029. Profitability isn’t expected until 2030. Anthropic, at roughly $30 billion in annualized run rate, projects positive cash flow no earlier than 2027 or 2028. These aren’t struggling startups. These are the category leaders, and they’re hemorrhaging money at a scale that would be considered catastrophic in any other industry.

So yes. The spending looks unhinged.

And I think that framing, by itself, misses something important.

Robert Solow wrote in the New York Times Book Review on July 12, 1987: “You can see the computer age everywhere but in the productivity statistics.” Computing capacity had increased a hundredfold in the 1970s and 1980s. Labor productivity growth had slowed from over 3% annually in the 1960s to roughly 1% in the 1980s. The paradox persisted for another decade. The macro gains didn’t arrive until the mid-to-late 1990s, a lag of ten to twenty years from widespread adoption.

We’ve been here before. Almost exactly here.

Paul David, the Stanford economist, traced the same pattern with electrification. Factory owners in the 1890s replaced their steam engines with electric dynamos and kept the same belt-and-pulley layouts. They gained almost nothing. The real productivity breakthrough came in the 1920s, when factories were redesigned from scratch around distributed electric motors. That redesign took a generation. Not because the technology wasn’t ready, but because the organizational imagination wasn’t ready. People couldn’t see the new shapes that the new capability made possible. They kept cramming the new thing into the old architecture.

I watched something similar happen with cloud migration between 2012 and 2018. Teams that “moved to the cloud” by lifting and shifting their existing applications gained almost nothing. Sometimes they spent more. The gains came later, when organizations rebuilt their systems and their teams around what cloud actually made possible: elastic scaling, managed services, deployment as a continuous activity rather than a quarterly event. The technology was available for years before the organizational model caught up.

Erik Brynjolfsson at Stanford has formalized this as the “Productivity J-Curve.” His argument, developed with Daniel Rock and Chad Syverson and published in the American Economic Journal: Macroeconomics, is that general-purpose technologies initially show lower measured productivity because firms are making massive, unmeasured investments in reorganization, training, and new processes. The payoff comes later, creating a J-shaped curve. Their research found that, after adjusting for these unmeasured intangible investments, true total factor productivity was 11.3% higher than official measures by the end of 2004. In a February 2026 Financial Times piece, Brynjolfsson pointed to a roughly 2.7% jump in U.S. productivity in 2025, nearly double the prior decade’s 1.4% average, as evidence that the “harvest phase” has begun.

Other economists are not convinced. Daron Acemoglu, who shared the 2024 Nobel Prize in economics, calculated that AI’s total factor productivity gains will likely be no more than 0.53% to 0.66% over ten years. His reasoning is blunt: only about 5% of the economy can be profitably affected by current AI, because only about 20% of tasks are exposed to it, and only about a quarter of those are cost-effective to automate. That’s roughly a tenth of Goldman’s own long-term projection that AI could lift global GDP by 7% over a decade.
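Acemoglu’s headline share is a simple product of the two rounded figures above. The sketch below uses those rounded inputs, not the paper’s more precise estimates, so read it as the shape of the argument rather than his exact calculation; the second line shows where “roughly a tenth” comes from.

```latex
\begin{aligned}
\text{share of economy affected} &\approx \underbrace{0.20}_{\text{tasks exposed}} \times \underbrace{0.25}_{\text{cost-effective to automate}} = 0.05 \\[4pt]
\frac{0.66\%}{7\%} &\approx 0.094, \qquad \frac{0.53\%}{7\%} \approx 0.076
\end{aligned}
```

Comparing a ten-year TFP estimate with a GDP projection is loose, but it conveys the size of the gap.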

Brynjolfsson and Robert Gordon at Northwestern have a formal wager on LongBets.org about whether U.S. productivity growth will average over 1.8% annually through 2029. Gordon, the author of The Rise and Fall of American Growth, argues that the great inventions of 1870 to 1970 were one-time transformations and that AI’s impact will be gradual, not sudden. Two serious economists, with real money on the line, reading the same evidence in opposite directions. That tells you something about how wide the uncertainty actually is.

There are counterarguments that I take seriously.

The adoption speed is genuinely unprecedented. A St. Louis Federal Reserve study found that generative AI reached a 39.5% adoption rate among U.S. adults after just two years, compared with 19.7% for PCs after three years and 20% for the internet after two years. By August 2025, that number hit 54.6%. Over 1.2 billion people used AI tools within three years of ChatGPT’s launch, a faster ramp than the internet, PCs, or smartphones managed.

Part of the reason is structural. Every previous general-purpose technology required building physical infrastructure from scratch. Electricity needed grids. Cars needed roads and gas stations. The internet needed broadband. AI needs a browser. It sits on top of infrastructure that already exists, which compresses the adoption timeline in a way that has no real historical precedent. Harvard economist David Deming pointed this out explicitly: generative AI is built on top of PCs and internet access as base layers.

The developer ecosystem is also orders of magnitude larger than anything earlier general-purpose technologies could draw on. GitHub hosts over 150 million developers. Roughly 1,000 to 2,000 new open-source AI models are uploaded to Hugging Face daily. API-first distribution means AI can be embedded into existing products without installing hardware. And with new frontier models shipping every four to six weeks, the iteration speed outpaces any physical technology’s improvement cycle.

I find these arguments compelling. And I still don’t think they resolve the tension.

What history keeps showing is that the bottleneck is never the technology. It’s the organizational adaptation. Getting humans and institutions to reorganize around a new capability takes about a generation regardless of how fast the capability itself improves. David showed this with the dynamo. We saw it with computing. We’re watching it with AI right now.

A telling example: when ATMs spread in the 1960s and ’70s, everyone assumed bank tellers would vanish. Instead, ATMs reduced the cost of running a branch, so banks opened more branches, and the total number of U.S. bank tellers actually increased from 1980 to 2010. Economist James Bessen documented this. The technology didn’t eliminate the work. It changed the economics of the work, which changed the shape of the industry in ways nobody predicted.

Software looks similar. Developers using AI complete 21% more tasks and merge 98% more pull requests. You might expect that to mean fewer programmers. Instead, it’s generating vastly more software, not less demand for the people who write it. The Jevons Paradox, first observed with coal in 1865, keeps showing up: when using a resource becomes more efficient, total consumption of it tends to rise rather than fall.

So where does that leave a leader trying to make real decisions right now? Not in 2030, not when the J-curve resolves, but this quarter, with a budget and a team and a board that has opinions?

I think the honest answer is uncomfortable. The $360 billion is probably not rational at the individual company level, but it might be rational at the portfolio level. Each company is making a bet it can’t afford to sit out. The cost of being wrong about investing is a few ugly quarters of write-downs. The cost of being wrong about not investing is irrelevance. When every competitor is spending, the calculus tips toward spending even if you privately believe the payoff is years away. I’ve seen this logic play out in enterprises debating cloud migration, in airlines investing in digital operations, in banks building mobile platforms. The individual ROI case was often weak. The strategic risk of falling behind was the real driver.
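The asymmetry in that argument can be written as a one-line expected-cost comparison. This is a deliberately simplified stylization, not a model taken from any of the sources above: $p$ is the probability that the payoff arrives in time to matter, $W$ is the cost of investing when it doesn’t (the write-downs), and $L$ is the cost of holding back when it does (irrelevance). The ratio $L = 9W$ in the last line is purely hypothetical.

```latex
\begin{aligned}
\mathbb{E}[\text{cost} \mid \text{invest}] &= (1 - p)\,W \\
\mathbb{E}[\text{cost} \mid \text{hold back}] &= p\,L \\
\text{invest} \iff p\,L > (1 - p)\,W &\iff p > \frac{W}{W + L} \\
L = 9W &\implies p > \tfrac{1}{10}
\end{aligned}
```

When $L$ dwarfs $W$, the break-even probability collapses toward zero, which is how a company can rationally keep spending while privately doubting that the payoff is near.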

That doesn’t make the spending wise. It makes it explicable. There’s a difference.

For teams and leaders closer to the ground, I’d focus on two things. First, treat AI adoption as an organizational design problem, not a technology deployment problem. The companies that gained from cloud were the ones that changed how they built and operated, not just where they ran their servers. The companies that will gain from AI are the ones that redesign workflows, incentives, and team structures around what AI makes possible. Buying licenses and running pilots, and stopping there, is the equivalent of bolting a dynamo onto a belt-and-pulley factory.

Second, watch the micro-level evidence more than the macro numbers. The macro stats will stay near zero for a while because that’s what general-purpose technologies do. But translation agencies have already lost 40 to 70% of their human workforce. AI coding tools are a $4 billion market that barely existed two years ago. Early-phase drug discovery timelines are compressing from years to months. These are narrow, specific, measurable impacts. The question for your organization is whether any of those narrow impacts touch your value chain, and whether your competitors are adapting faster than you are.

Goldman says basically zero. History says that’s on schedule. The $360 billion says the people writing the checks believe the zero is temporary.

I think they’re probably right. I also think a lot of that money is going to burn before anyone proves it.