Ninety Percent of CEOs Say AI Changed Nothing. The Other Ten Percent Have a PR Team.

An NBER survey of 6,000 executives reveals a productivity gap that most boardrooms won't talk about honestly.

Late last year, I came across an article about a company’s AI strategy. Big claims. Millions in projected savings. A quote from their CEO about “changing how we build forever.” I read the whole thing. Then I pulled up their last two quarterly earnings calls and searched for the word “productivity.” It appeared once, in a boilerplate answer about operational efficiency. No numbers. No before-and-after. No specifics at all.

That gap between the press release and the earnings call is where a lot of the AI conversation lives right now.

The NBER surveyed nearly 6,000 senior executives and found that nine out of ten reported zero impact on employment or productivity from AI over the prior three years. Not “modest gains.” Not “early signs.” Zero. I wrote last week about the macro picture, the spending, the GDP numbers, the historical parallels. The economics are worth understanding. But the number that stays with me isn’t the $360 billion or the Solow paradox. It’s the nine out of ten. Because that’s not a macro statistic. That’s thousands of individual leaders looking at their own organizations and saying: nothing changed.

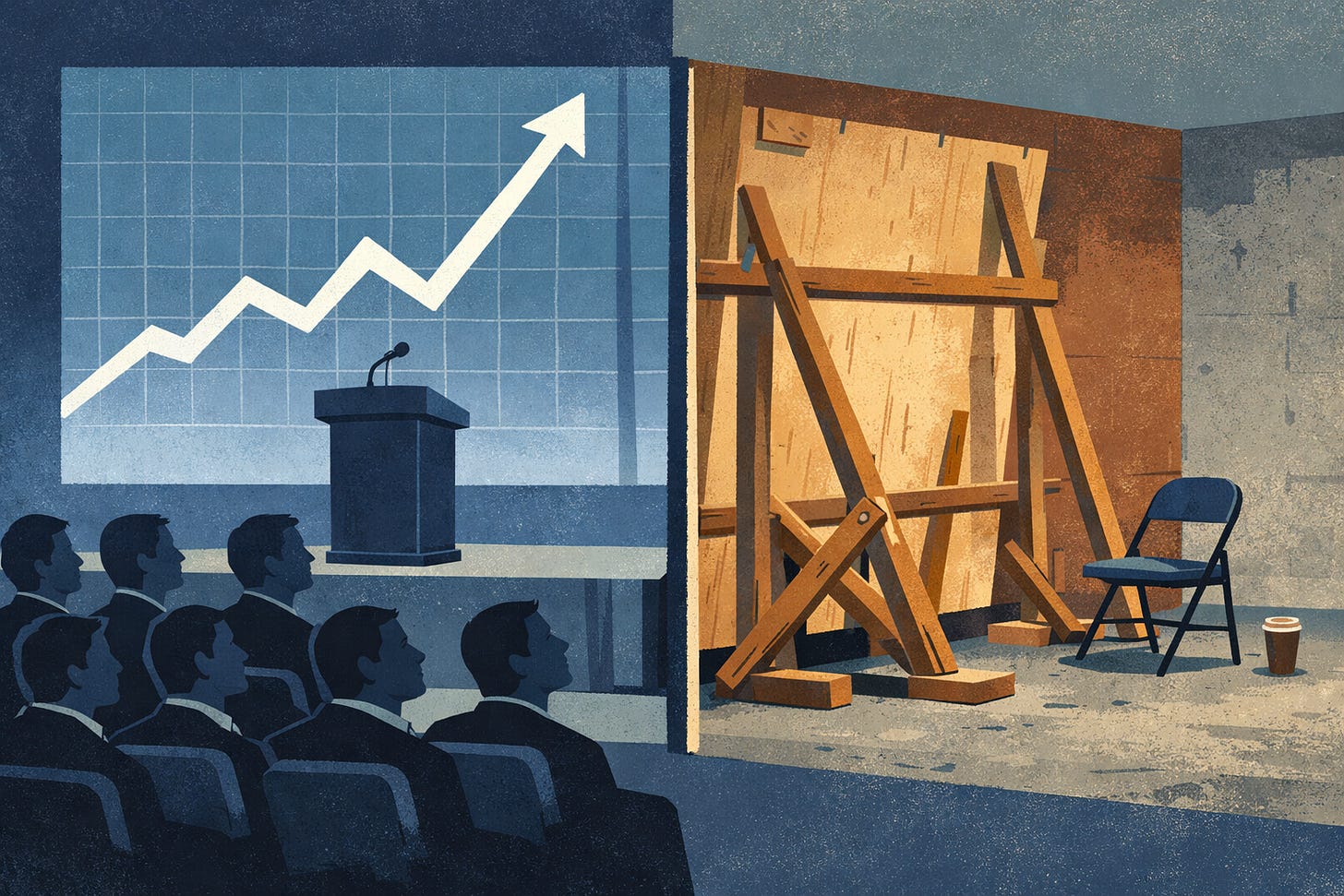

I’ve sat through enough industry conferences to recognize the pattern. A senior leader takes the stage. Slides with large percentages and upward arrows. A case study from one team, usually small, usually recent, usually cherry-picked because it worked. The audience nods. Everyone leaves feeling like they’re behind. Nobody asks: “What happened to the other 94% of your organization?”

McKinsey’s 2025 workplace research found that 62% of organizations report productivity increases of 25% or more from AI. Sounds impressive until you pair it with this: only 20% of engineering teams are actually using metrics to measure AI’s impact. Nearly two thirds of companies are claiming results while only a fifth of teams are measuring anything, which means most of those claims haven’t been instrumented. They’re reporting feelings, not findings.

I’ve done this myself. At a previous company, someone asked me in a quarterly review whether the AI tools were helping the team. I said yes, because a couple of engineers had told me they loved the coding assistant and their output had ticked up. I didn’t mention that most of the team was barely using it, or that we hadn’t set up any real measurement. I gave the answer the room wanted because the room wasn’t asking a real question. It was looking for confirmation that the investment was working.

That’s the thing about productivity claims in organizations. They flow upward through a series of rooms where the incentive is always to round up. An engineer says “it helps sometimes.” Their manager reports “positive adoption signals.” The director says “we’re seeing early gains.” By the time it reaches the C-suite deck, it’s a bar chart trending up and to the right with no error bars.

The LeadDev Engineering Leadership Report surveyed over 600 engineering leaders in 2025. Sixty percent said AI hadn’t meaningfully boosted team productivity. The majority reported only small gains. That’s a more honest number, and it comes from people close enough to the work to know what’s actually happening. But you won’t see that stat in a keynote.

I keep coming back to a question that I think matters more than whether AI “works.” The question is: what does it cost an organization to pretend something is working before it actually is?

The cost is specific and I’ve watched it accumulate across multiple organizations. Sprint commitments get padded because someone read a vendor white paper that promised 30 to 40% productivity gains under ideal conditions. Managers absorb the gap between those projections and what the team can actually deliver, quietly burning themselves out. Adoption drops off within the first month because nobody built time for the learning curve. And when delivery slips, the tools get blamed, which poisons the well for the actual adoption work that might have produced real results six months later.

I lived through a version of this with cloud migration. Between 2013 and 2016, company after company announced they were “cloud-first” while running 90% of their workloads on legacy infrastructure. The press releases came first. The architecture changes came years later, if they came at all. But that story had a specific ending: the companies that actually benefited were the ones that quietly reorganized and didn’t declare victory until the work was done. The ones that announced the transformation before doing it got stuck performing progress instead of making it.

That’s what I see happening with AI in most organizations right now. Performance of progress. A dashboard that counts AI-assisted pull requests goes up, so it goes into the board deck. That’s not measurement. That’s decoration.

I think about the 10% of executives in that NBER survey who did report productivity impact, and I wonder how many of them would hold up under scrutiny. Some of them, probably. The ones who invested in workflow redesign, set clear expectations, measured before and after, and gave their teams time to adapt. Those organizations exist. I’ve seen a few.

The nine out of ten who said “zero” might actually be the more honest group. They looked at the numbers, couldn’t find the signal, and said so. In a business culture that rewards premature optimism about technology investments, admitting you spent money and can’t show results takes a kind of courage that doesn’t get celebrated at conferences.

What I’d rather see from leadership, myself included, is a simpler frame. We’re investing in AI because we believe the capability is real and the long-term cost of not investing is too high. We don’t have productivity data yet because we’re still learning how to use these tools well. That’s where we are. We’ll tell you when it changes.

No bar charts. No projected savings. No implying that the transformation already happened.

Nine out of ten executives told researchers the truth. The interesting question is whether any of them are saying the same thing to their boards.